Iceland Soil

This week I have been mostly working on accessing Iceland soil in the ancient DNA lab. The soil was shipped back from Iceland after the trip in 2014. It currently lives in the ancient DNA lab because eventually DNA will be extracted from it, and mammalian DNA with specific markers (horse and a few others) will be amplified. The goal is to determine what animals were raised in the ancient human settlement formerly at the site where the samples were taken.

Today I will follow the sterile lab protocols to take out a very small sample of the soil and bring it to the analytical chem lab to be dried out in an oven, which kills any living microbes. The soil that stays behind in the ancient lab will still be usable for DNA extraction, and there will be more than enough left for multiple extractions.

Other than soil, I’m working on using OpenSCAD to design housings for the sensors, which we will 3D print. My first priority is a housing for the TI Nano, followed by the field platform. The Nano needs a case so we can change its orientation 180 degrees and take soil spectra. I would like to get this working soon.

I am making progress on all of the sensor platforms. Kristin has been making a lot of progress on BLE so the sensors should be integrated with Field Day and ready for testing soon.

This is getting really interesting

o On the archeology front, Rannveig found this article about the possibility of a new Viking site being excavated in Newfoundland. They must have stopped along the way from Scandinavia, and Skalanes seems like a nice place for that. Who knows what we’ll find.

o Nic has made some progress with LIDAR, although the gear is proving to be a bit delicate. We’ll probably order a second unit to work with. Here is an early image taken during today’s meeting. The unit is on the round table; on Nic’s screen is the developing point-cloud of the room and its contents. If you look very closely you can see people on couches (/very/ closely…)

o We need to sort out a magnetometer before long; hopefully Patrick can loan us one.

o On the logistics front we need to make plane reservations fairly soon. All the lodging and transportation is sorted modulo the ferry to Grimsey; we’ll just wing that early in the morning of the day we travel.

o I started working with our Yocto altimeter recently. We’ll use it as part of the kit that provides more accurate x, y, and z geo-coordinates than consumer-grade GPS chipsets alone do.

o Kristin and I worked through most of the details of the interface between FieldDay and the Arduino-based sensor platforms. Here is a schematic of it; off to the left is the Postgres database where FieldDay pushes readings in CSV form.

o I think it’s time we started the Iceland16 playlist. It looks like Spotify is currently the most popular platform for doing so.

BLE in Arduino and Android

I have accomplished quite a bit within the past week, if I do say so myself. Last week, I said that I had begun working on the BLE part of Field Day. Well, after some struggle I finished it! I am able to send a request to the Arduino device and, upon receiving that request, the Arduino device sends a message back to the Android device, which writes it to the screen (woo!). But the code is not pretty. Android provides a Bluetooth Low Energy example in Android Studio, but it uses deprecated code. I researched and was able to modernize it.

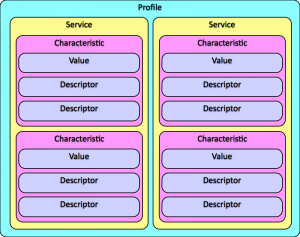

Bluetooth Low Energy is pretty complicated as a service. It uses something called GATT (Generic Attribute Profile). The Bluetooth server (the Arduino device) has a GATT profile; within that profile there are services, and services have characteristics. Each service can have multiple characteristics, but there can only be one TX and one RX (read and write) characteristic per service. I learned this the hard way. You can see a diagram of what I’m talking about below.
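To make the nesting concrete, here is a minimal sketch of the profile, service, and characteristic hierarchy as plain Python data classes. It is not the Android or Arduino API: the class names, the `role` field, and the UUID strings are all made up for illustration, and the one-TX/one-RX check simply encodes the rule as described above, which may be specific to our shield's service rather than to GATT in general.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Characteristic:
    uuid: str   # placeholder string, not a real BLE UUID
    role: str   # "TX", "RX", or "OTHER" (hypothetical labels)


@dataclass
class Service:
    uuid: str
    characteristics: List[Characteristic] = field(default_factory=list)

    def add(self, characteristic: Characteristic) -> "Service":
        # Encode the rule from the text: at most one TX and one RX per service.
        if characteristic.role in ("TX", "RX"):
            for existing in self.characteristics:
                if existing.role == characteristic.role:
                    raise ValueError(
                        f"service {self.uuid} already has a {characteristic.role} characteristic"
                    )
        self.characteristics.append(characteristic)
        return self


@dataclass
class GattProfile:
    services: List[Service] = field(default_factory=list)


def build_example_profile() -> GattProfile:
    # One service carrying a TX/RX pair, with hypothetical identifiers.
    serial = Service(uuid="hypothetical-service-uuid")
    serial.add(Characteristic("hypothetical-tx-uuid", "TX"))
    serial.add(Characteristic("hypothetical-rx-uuid", "RX"))
    return GattProfile(services=[serial])
```

Adding a second TX characteristic to the same service raises a `ValueError`, which is roughly the mistake I ran into on the real device.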

For the Arduino side, we use the Red Bear Labs BLE shield. There is a standard BLE library for the Arduino but it’s really complex. I’ve read over the code multiple times and I’m just now grasping a little bit of it. RBL has constructed a wrapper for that code. They broke it down into 10 or so different functions, which I must say is mighty useful. I used that when constructing the Arduino code.

While coding the BLE on Android I discovered that most of our sensor Fragments are identical except for their names. After talking with Charlie, we’ve decided to get rid of the individual fragments for each sensor and just have one called ‘Bluetooth Sensors.’ I’m working on moving the BLE code to that setup now. Hopefully I will be done with it within the day.

Lat Long Database Function Progress

To help with the bounding box interface and further data clean-up, I’m working on a function in PSQL that takes two lat long pairs and calculates the distance between them. I was able to get the function working, but before writing the update that inserts a column in the readings table with values, I want to make sure I know how to read the results my function is giving me right now.

A rough first look at the values my function gives me shows that it isn’t returning values in km or miles right now. We definitely want this function to populate the table with a value that can be easily QA’ed (miles vs. km, anyone?), so I’m now going back and looking over the math of how the function works to get it to return an easily usable value.
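For reference while checking the math, here is a sketch of the haversine great-circle distance in Python. This is not the PSQL function itself, and the function names are made up for illustration. One common reason a distance function returns values that are neither km nor miles is that it stops at the central angle (in radians) and never multiplies by the Earth's radius; that is only a guess about our case, so the sketch keeps the two steps separate.

```python
import math

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius
KM_PER_MILE = 1.609344


def central_angle_rad(lat1, lon1, lat2, lon2):
    """Angle between two lat/long points (degrees in, radians out)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    # Clamp to 1.0 to guard against floating point overshoot.
    return 2 * math.asin(min(1.0, math.sqrt(a)))


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres."""
    return EARTH_RADIUS_KM * central_angle_rad(lat1, lon1, lat2, lon2)


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in statute miles."""
    return haversine_km(lat1, lon1, lat2, lon2) / KM_PER_MILE
```

A quick sanity check is one degree of latitude along a meridian, which should come out near 111 km (about 69 miles).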

After I have that figured out, the clean-up of the sectors, which Charlie will help me with, can begin.

LIDAR or: how I learned to stop reason and love a challenge

So, LIDAR, or light-based radar, has become much more available over the years and its price has dropped considerably, to the point of being relatively cheap even. So we jumped at that!

I spent the last bit of time jumping into working with LIDAR. The lidar unit is capable of 10 Hz, which translates to 2000 samples per second. I initially got the laser to work using a visualisation program known as RVIZ, which is part of the Robot Operating System (ROS). ROS is sort of the de facto open source robot/machine learning system… that being said, it is brittle and has very little documentation beyond getting set up. I initially tried to get ROS running in my native Mac OS X environment but ran into complications running on El Capitan, so I’ll put that off for a later date.
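As a quick bit of arithmetic on those numbers, here is a small Python sketch. It assumes the 10 Hz figure is the rotation (scan) rate and that samples are spread evenly around a full 360 degree sweep; neither is confirmed by the unit's documentation here, and the function names are just for illustration. It also shows how one (angle, distance) sample becomes an x, y point of the kind the visualisation draws.

```python
import math


def samples_per_revolution(samples_per_second, revolutions_per_second):
    """How many range samples land in one full sweep."""
    return samples_per_second / revolutions_per_second


def angular_step_deg(samples_per_second, revolutions_per_second):
    """Angle between consecutive samples, assuming an even 360 degree sweep."""
    return 360.0 / samples_per_revolution(samples_per_second, revolutions_per_second)


def polar_to_xy(angle_deg, distance_m):
    """Convert one (angle, range) sample to Cartesian x, y in metres."""
    theta = math.radians(angle_deg)
    return distance_m * math.cos(theta), distance_m * math.sin(theta)
```

Under those assumptions, 2000 samples per second at 10 Hz works out to 200 samples per sweep, or one sample every 1.8 degrees.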

Through an Ubuntu virtual machine (with much trial and error) I finally got a laser point visualisation, which you can see in the video below. What you are looking at is a college student in his natural habitat practicing for his entrance into the Ministry of Silly Walks.

Some time after getting this working, the LIDAR took a small tumble off a desk and stopped working. Over a total of 3 days that same college student frantically took the device apart, wiggled a few of the connections and re-aligned the laser sensor with the laser beam using a third-party laser source. After that it started working correctly again.

The next step is to get the laser vis into a simultaneous localization and mapping (SLAM) program.

Handlers handling handles

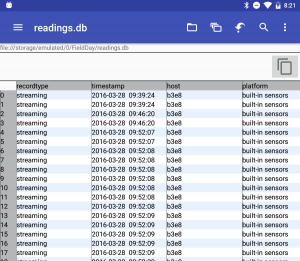

After some testing of the Handler implementation in Field Day, I’ve determined that it is actually working! I was finally able to look at the data that I had been writing to the database.

Databases in Android are stored in internal (private) memory. There are Android apps that let you look at the files on your device, but only for external storage. The internal storage is private. What I ended up doing was adding a function to write the database from internal storage to external storage. Once it was on external storage, I used a SQLite Viewer Android app to look at the database and it looks good!

I did some research into the application state when the device screen is turned off. In our old application, Seshat, the activities would die when the screen was off. That’s not good. We want to be able to turn off the screen and hang the Android device from our person somehow. It’s useful to have our hands free. After a couple hours of research, I determined that what we want to look at is the PowerManager class on Android, particularly the PARTIAL_WAKE_LOCK level. I wrap the methods I want to run while the screen is off in what are essentially ‘start’ and ‘stop’ methods. The methods were not executing with the screen off, so I researched some more. I think what will ultimately happen is switching the SensorSampleActivity to a Service. A Service in Android is one of the top priority processes. It’s one of the last to die if the device needs more memory. It can continue running even if the Activity that started it has died. Since the Activity is now working, I’m going to hold off on converting it to a Service.

The last thing I worked on this week was Bluetooth LE in Android. Tara gave me a BLE shield with an Arduino attached to test my code with those devices. Android provides a BLE example in Android Studio so I’ve been working with that. However, the example they provide has deprecated code so I’m working on modifying it to the new setup. It’s not working yet, but I’ve only been working on it for a couple of hours!

A Continuation of GIS

I did some further investigation into the GIS layers that we now have. I found out some really useful information; however, we need some more direction from Oli in order to be more efficient and productive in putting layers together and figuring out what is useful. I did run some conversions from vector to raster, but the files were really large, so I am going to try another tool for this conversion. Here is part of the email I sent to Oli and Rannveig:

- There are three layers in archaeology that look similar to the 2007 map Rannveig sent. However, there isn’t any information attached in the attribute table, or it is just in the description.

- Iceland layers- all pretty straightforward and informative.

- Lupin layers

- Eider layers

- Terns- lots of layers with information collection of location?

Databases, and lat long functions

Since my last post was a very long time ago, this is what has happened with the data clean-up over the past weeks:

Eamon and I both cleaned up all the data we could find individually. Since we’d worked separately, when we compared the cleaned data sets that we each had, they turned out to be different. Neither of us had all the data individually, but when our data sets were combined, the list was exhaustive of all permutations of Iceland 2014 and Nicaragua 2014 data. Then, with Charlie’s help, we were able to determine which of the data sets we needed to zorch. It turns out that a significant chunk of our data sets needed to be zorched, because we each had thousands of rows of testing data or data taken in the car while the group was driving.

After much too much time wrestling data in spreadsheets, the readings table is finally in the field science database where further clean-up relating to sectors and spots can be done. As of right now, most Iceland 2014 data has no sector or spot data. With Charlie’s help, I can now populate the sectors. This should be way quicker to do by date, time stamp and lat long coordinates now that we have it all in the database.
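One way the lat long part of that could work is a simple bounding-box lookup, sketched here in Python rather than in the database. Everything specific in it is hypothetical: the `Sector` type, the sector names, and the box coordinates are placeholders and not our real sectors, and the real clean-up would also use date and time stamp.

```python
from typing import List, NamedTuple, Optional


class Sector(NamedTuple):
    name: str
    min_lat: float
    max_lat: float
    min_lon: float
    max_lon: float


def assign_sector(lat: float, lon: float, sectors: List[Sector]) -> Optional[str]:
    """Return the name of the first sector whose box contains the point, else None."""
    for sector in sectors:
        if (sector.min_lat <= lat <= sector.max_lat
                and sector.min_lon <= lon <= sector.max_lon):
            return sector.name
    return None


# Hypothetical boxes, for illustration only.
EXAMPLE_SECTORS = [
    Sector("sector-a", 65.0, 65.5, -14.0, -13.5),
    Sector("sector-b", 66.5, 66.6, -18.1, -17.9),
]
```

Readings that fall outside every box come back as `None`, which makes leftover testing data or in-the-car data easy to spot.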

Next up, I will be working to create/adapt a function that measures the distance between a pair of lat long coordinates. This should help with further clean-up and with the bounding box interface.

There’s only 28 thousand days…

Actually it’s only about 80 days until we leave but Alicia Keys’ song is stuck in my head after hearing it this morning when we were working in the lab. I do know the answer to her second question, Iceland.

Eamon and Deeksha are making progress on the data, data model, and viz tool. We are going to create a function that measures the distance between two lat,lon pairs to make annotating the site information easier and to provide a hook for the bounding-box interface. We wire-framed the viz tool UI, and Eamon is going to construct a message to Patrick about open map tiles that we can cache on our devices.

Erin is chugging along with QGIS; we owe Patrick an adult beverage for suggesting that we use it. Nic recently discovered that there is a plug-in for it that supports LIDAR generated point-clouds in a layer (as a layer?).

I ran into Kelly Gaither (chair of XSEDE16) at IU on Friday. She strongly encouraged us to produce a poster for XSEDE16 on the UAS + LIDAR + machine learning package. She thinks it’s quite unique. Unfortunately she did remember me from the conference last summer when I hid under the table during the awards ceremony.

Next up for me is working on the high resolution Z platform and the soil temperature platform. I would like to work on Field Day too.